Cloud-Native Security Practices: Governing AI Agents and Zero Trust

Cheta Pandya

The perimeter model of enterprise security didn't slowly erode. It collapsed the moment production workloads started spinning up ephemeral AI agents, container fleets, and automation scripts across multi-cloud accounts simultaneously. Cloud-native security practices have had to adapt fast, and most organizations are still catching up.

What makes 2026 genuinely different from prior years is that the threat surface is no longer primarily technical. It's behavioral. AI agents act without waiting for human approval. That changes what 'securing your environment' actually means in practice. The arrival of OpenClaw and similar local AI agents, in particular, is pushing organizations to redefine cloud security.

Because many of these agents run in virtualized environments, securing them becomes crucial. This post covers what enterprise teams need to address right now:

- Agentic attack surfaces

- A reshaped shared responsibility model

- Zero-trust enforcement for machine identities

- Cloud security and data governance frameworks

This is not a vendor overview. It is a comprehensive framework for consolidating cloud security.

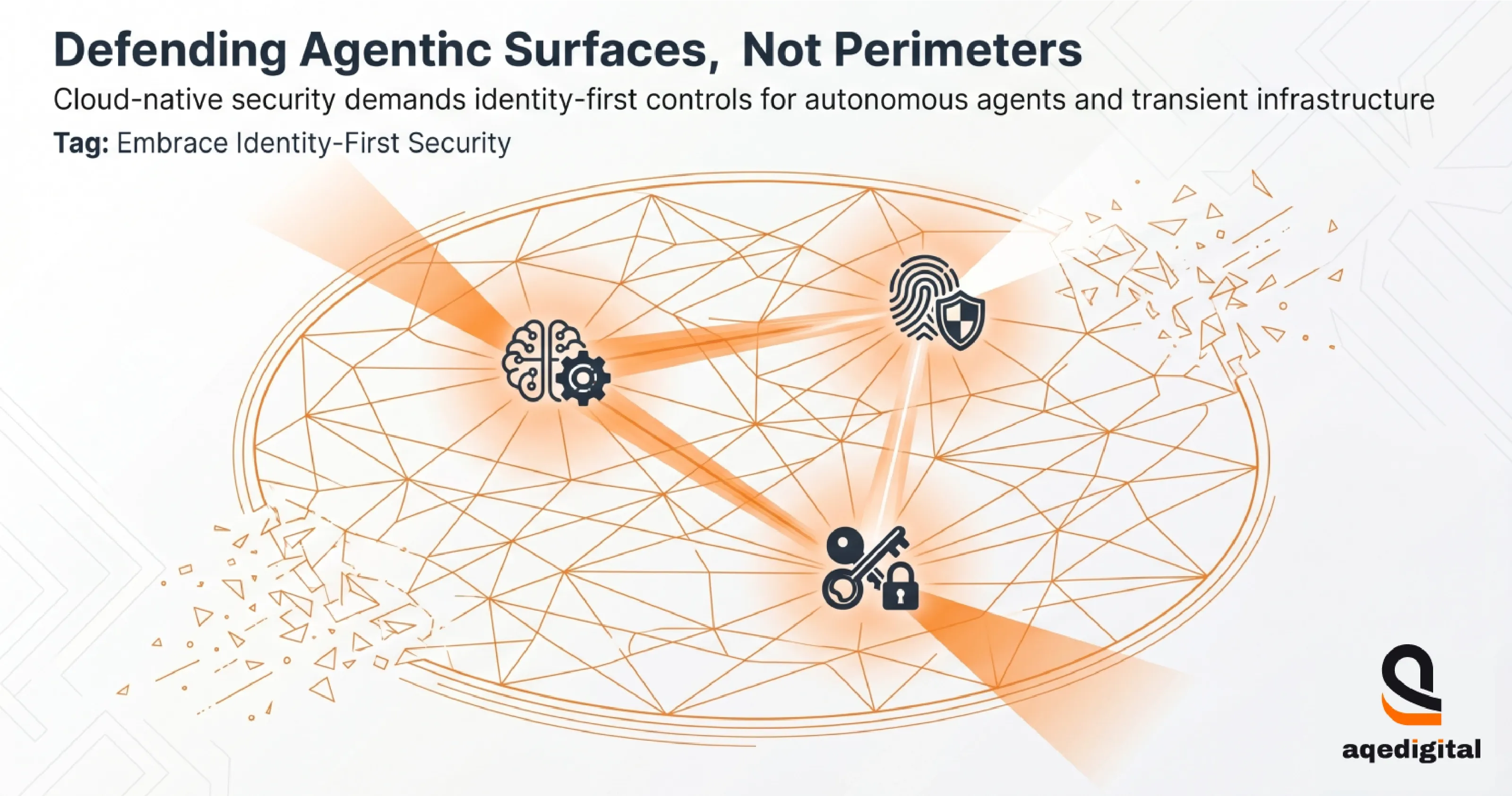

Cloud-Native Security Practices Now Defend Agentic Surfaces, Not Perimeters

Agentic attack surfaces describe the expanding set of machine identities, autonomous agents, and transient infrastructure that can take action without human approval. They are now the primary focus of cloud-native security practices for most enterprises.

Firewalls and VPNs were designed around the premise that insiders are trusted, outsiders aren't, and the network boundary separates them. That premise has nothing to do with how modern workloads behave.

A container that spins up for 40 seconds and calls three external APIs doesn't have a persistent IP address to add to an allowlist. An AI agent that reads files, sends emails, and calls external services doesn't fit the traditional network security model at all.

Machine identities have become the real gatekeepers between service calls. Vibe coding, where developers rely heavily on AI-generated code to build Terraform modules, policy definitions, or microservices, introduces structural risk.

AI-generated code still carries a meaningful rate of vulnerabilities before a human reads it. These risks compound when inventory tracking is incomplete and the telemetry that teams collect doesn't cover every agent spin-up.

Cloud Security Framework: Why the Perimeter Model Breaks Down for AI Workloads

The old perimeter was defined by network topology. Cloud-native environments are defined by workload identity. Those are two entirely different design philosophies, and mixing them produces security gaps that are invisible to traditional monitoring tools but obvious to anyone who maps how an AI agent actually moves through a system.

The implication for security architecture is that cloud-native application security now requires identity-first controls at every layer, not network controls layered on top of an application as an afterthought.
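
To make the contrast concrete, here is a minimal Python sketch rather than a production design. The `Request` type, the allowlist, and the SPIFFE-style identity string are hypothetical names chosen for illustration. The only point is that a rescheduled container with a new IP fails the network check but still passes the identity check.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Request:
    source_ip: str    # changes whenever an ephemeral container is rescheduled
    workload_id: str  # stable identity asserted by the workload (illustrative format)
    action: str


# Hypothetical network-era control: trust is tied to an address.
IP_ALLOWLIST = {"10.0.0.12"}

# Hypothetical identity-first control: trust is tied to who the workload is
# and which actions that identity has been granted.
IDENTITY_POLICY = {
    "spiffe://example.org/agents/report-writer": {"storage:read"},
}


def allowed_by_network(req: Request) -> bool:
    return req.source_ip in IP_ALLOWLIST


def allowed_by_identity(req: Request) -> bool:
    granted = IDENTITY_POLICY.get(req.workload_id, set())
    return req.action in granted


# The same agent, rescheduled onto a new address.
rescheduled = Request(
    "10.0.7.91", "spiffe://example.org/agents/report-writer", "storage:read"
)
```

In a real deployment the `workload_id` would have to be cryptographically attested, not simply claimed by the caller. The sketch skips that step to keep the comparison readable.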

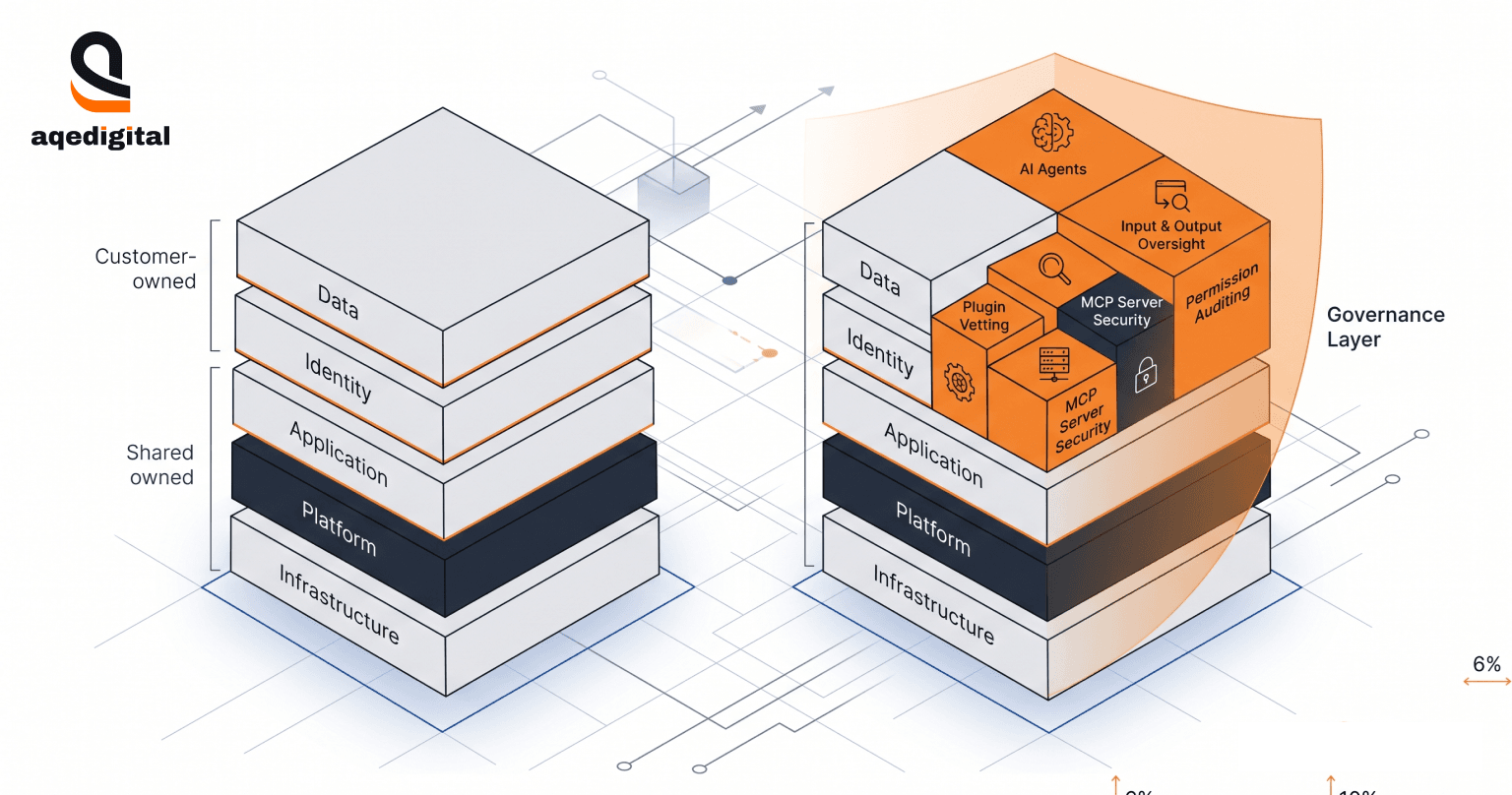

AI Agent Governance Reshapes the Shared Responsibility Model in 2026

AI agent governance reshapes the Shared Responsibility Model by requiring customer teams to own AI inputs and outputs, agent permissions, plugin vetting, and the MCP servers those agents call, per the CSA State of Cloud and AI Security in 2026 update.

The Shared Responsibility Model has always required customers to manage their data, identities, and applications while providers handle the underlying infrastructure. What changed is the scope. Every AI agent a team deploys becomes a customer responsibility: its permissions, its training data inputs, the marketplaces it pulls plugins from, and every MCP server it connects to. That's a governance surface most procurement and engineering teams didn't have to care about two years ago.

The structural challenge is that alignment is fragile when pace trumps oversight. If an engineering team ships new AI capabilities faster than security can audit the agent permissions involved, the governance model breaks down quietly.

Cloud Security Strategy: Building the AI Partner Governance Layer

AQe Digital Cloud Consulting services add a governance tier to every cloud security engagement, specifically to address the ownership gap identified by the CSA model. This means defining which agents are sanctioned, which aren't, and who owns the audit trail when they make decisions.

That sounds straightforward. In practice, procurement, engineering, and security must agree on a shared taxonomy before any agent goes to production, and that alignment is harder than the technical controls that follow.
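
One way to picture that shared taxonomy is as a small registry that a deployment pipeline can query. The sketch below is an assumption about what such a registry might hold, not a description of any specific product. `AgentRecord`, `Status`, and `may_deploy` are hypothetical names.

```python
from dataclasses import dataclass
from enum import Enum


class Status(Enum):
    SANCTIONED = "sanctioned"
    UNSANCTIONED = "unsanctioned"
    UNDER_REVIEW = "under_review"


@dataclass(frozen=True)
class AgentRecord:
    agent_id: str
    status: Status
    business_owner: str  # who answers for what the agent is used for
    audit_owner: str     # who owns the audit trail for its decisions


def may_deploy(registry: dict, agent_id: str) -> bool:
    """Allow production deployment only for sanctioned agents with a named audit owner."""
    record = registry.get(agent_id)
    if record is None:
        return False  # unknown agents are treated as unsanctioned
    return record.status is Status.SANCTIONED and bool(record.audit_owner.strip())
```

The design choice worth noting is the default: an agent that nobody has registered is denied, so the registry has to be filled in before anything ships.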

AI Agent Governance: Locking Zero-Trust Architecture on Every Machine Identity

Zero-trust architecture requires every actor, human or machine, to prove its identity and intent before the system authorizes a data or credential transfer, per the CSA State of Cloud and AI Security in 2026 update. Applied to AI agents, this means every machine identity becomes an explicit decision point before data flows, not an implicit trusted participant because it was deployed internally.

Cloud-native security platforms that implement zero trust for machine identities rely on continuous verification: short-lived credentials, context-aware policy evaluation, and behavioral baselines that flag deviations. The execution challenge is that telemetry is still frequently fragmented across SaaS teams, creating gaps between the zero-trust narrative many organizations publish and the enforcement plane they actually operate on.
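
Short-lived credentials are the easiest of those three mechanisms to illustrate. The following is a minimal sketch using only the Python standard library. The signing key, token layout, and function names are assumptions made for the example, and a real system would normally rely on an established token format and a managed key store instead.

```python
import hashlib
import hmac
from typing import Optional

SIGNING_KEY = b"demo-signing-key"  # hypothetical; never hard-code real keys


def _sign(payload: str) -> str:
    return hmac.new(SIGNING_KEY, payload.encode(), hashlib.sha256).hexdigest()


def issue_token(identity: str, ttl_seconds: int, now: float) -> str:
    """Return 'identity|expiry|signature' valid for ttl_seconds from now."""
    expires = int(now) + ttl_seconds
    payload = f"{identity}|{expires}"
    return f"{payload}|{_sign(payload)}"


def verify_token(token: str, now: float) -> Optional[str]:
    """Return the identity if the token is authentic and unexpired, else None."""
    try:
        identity, expires, signature = token.rsplit("|", 2)
    except ValueError:
        return None  # malformed token
    if not hmac.compare_digest(signature, _sign(f"{identity}|{expires}")):
        return None  # tampered or signed with a different key
    if now >= int(expires):
        return None  # expired: the agent must re-authenticate
    return identity
```

Passing `now` explicitly keeps the example deterministic. In practice the caller would supply `time.time()`, and every verification would also feed the context-aware policy and behavioral checks the paragraph above describes.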

Cloud-Native Security Practices Treat OpenClaw as the Proof Case for Agentic Risk

OpenClaw is an autonomous AI agent that can execute shell commands, read and write files, call external APIs, send emails, and manage calendars. That combination of capabilities makes it a clear example of what security researchers now call the “Lethal Trifecta.”

The trifecta is untrusted inputs, privileged access, and outbound capability operating in the same agent. Many users also flagged a significant share of publicly listed ClawHub skills as malicious, indicating that the marketplace itself is an attack vector.

The reason this matters for cloud-native security practitioners isn't that OpenClaw is uniquely dangerous. It's representative. The same architecture pattern, an AI agent with broad permissions, plugin access, and outbound connectivity, is becoming a standard production configuration across many enterprises.

OpenClaw just made the exposure visible at scale before the industry had governance frameworks in place to handle it.
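
The trifecta is simple enough to check mechanically once an agent's capabilities are written down. The sketch below assumes a hypothetical `AgentProfile` record with one boolean per factor. How those booleans get populated for a real agent is the hard part and is out of scope here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentProfile:
    name: str
    handles_untrusted_input: bool   # e.g. reads inbound email or web content
    has_privileged_access: bool     # e.g. shell, file system, credentials
    has_outbound_capability: bool   # e.g. can call external APIs or send email


def trifecta_factors(agent: AgentProfile) -> list:
    """List which of the three risk factors are present for this agent."""
    factors = []
    if agent.handles_untrusted_input:
        factors.append("untrusted inputs")
    if agent.has_privileged_access:
        factors.append("privileged access")
    if agent.has_outbound_capability:
        factors.append("outbound capability")
    return factors


def is_lethal_trifecta(agent: AgentProfile) -> bool:
    """True only when all three factors coexist in the same agent."""
    return len(trifecta_factors(agent)) == 3
```

An agent that trips the check is not automatically forbidden. It is a prompt to remove one of the three factors or to add compensating controls around it.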

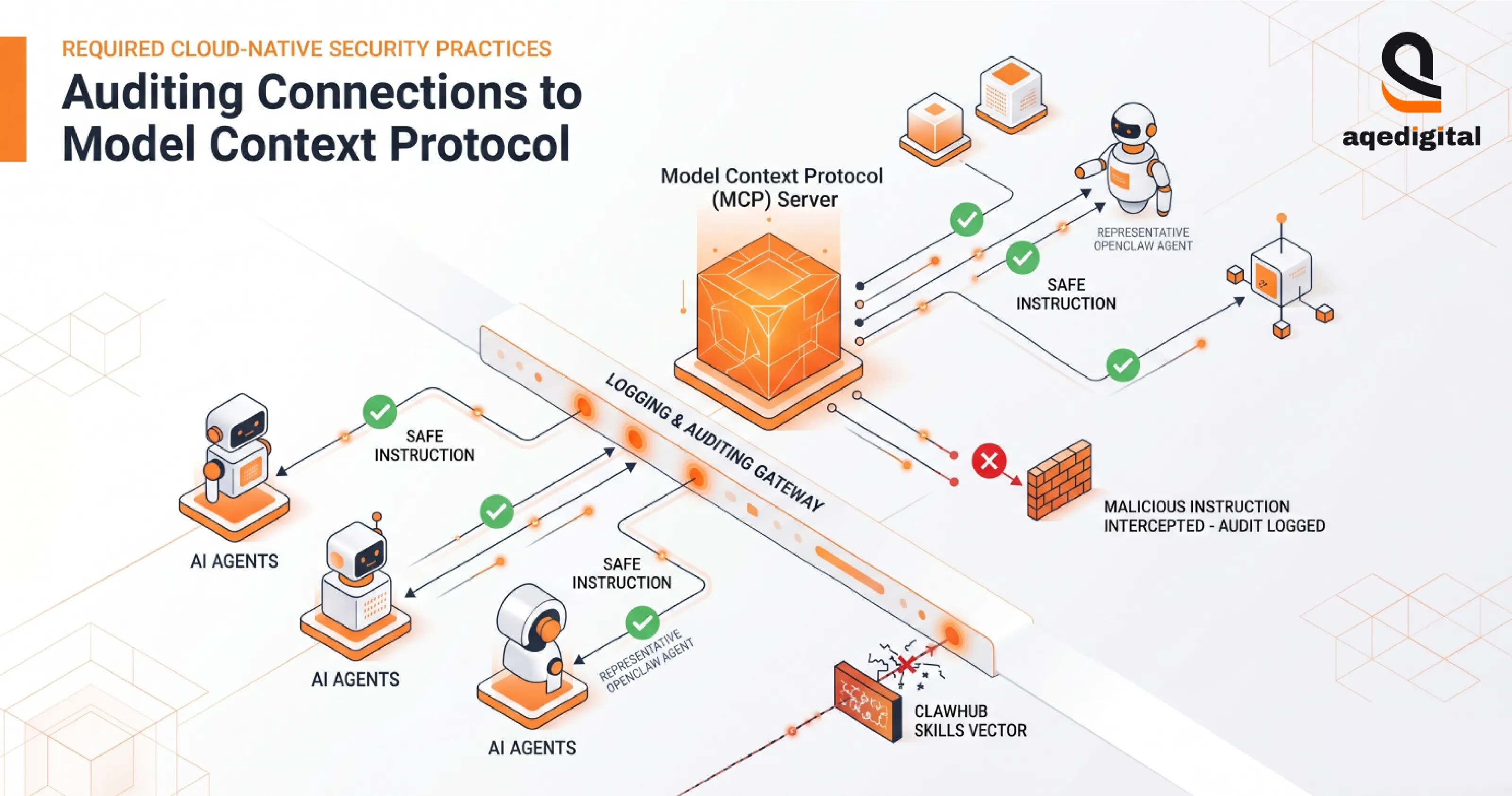

AI Agent Governance: Auditing MCP Servers, Supply Chain, and Federated Memory

Model Context Protocol servers connect LLMs, databases, and APIs, and an exposed MCP endpoint is the vector that allows an attacker to push malicious instructions to every downstream agent that queries it. Cloud-native security practices that take this seriously require logging and auditing every MCP server access before an agent is permitted to proceed to another instruction cycle.
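
A rough sketch of that audit gate follows, under the assumption that the agent runtime lets you wrap each MCP call. `audited_mcp_call`, `audit_sink`, and `invoke` are hypothetical names and do not belong to any MCP SDK. The rule the sketch enforces is that a call which cannot be logged does not happen.

```python
import json
import time
from typing import Any, Callable


class AuditError(RuntimeError):
    """Raised when an MCP access could not be recorded."""


def audited_mcp_call(
    agent_id: str,
    server: str,
    tool: str,
    invoke: Callable[[], Any],
    audit_sink: Callable[[str], None],
) -> Any:
    """Write an audit record first; only then let the agent reach the MCP server."""
    record = {"ts": time.time(), "agent_id": agent_id, "server": server, "tool": tool}
    try:
        audit_sink(json.dumps(record))
    except Exception as exc:
        # Fail closed: no audit trail means no access.
        raise AuditError(f"refusing call to {server}: audit write failed") from exc
    return invoke()
```

Failing closed trades availability for traceability. Teams that cannot accept that trade usually buffer audit records locally instead, which is a different design from the one shown.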

Addressing AI-Generated Misconfigurations and MCP Exposure

AI-generated misconfigurations are the quiet operational risk that cloud security teams haven't yet fully prepared for. Vibe coding accelerates delivery cycles, but it can bypass standard code reviews and push policy flaws directly into production before a human catches them. This is why partnering with a custom software development service provider to ensure proper review of AI-generated code becomes crucial.

The OWASP Top 10 for LLM Applications formalizes attack vectors that cloud-native teams now need to account for, especially when dealing with AI-generated code. These include prompt injection, guardrail bypass, context poisoning, and related attack classes.

The MCP exposure problem runs in parallel. The same scans that surface vulnerable agent runtimes reveal publicly accessible MCP endpoints that no one on the security team owns or monitors. Those aren't edge cases. They're the predictable result of shipping AI capabilities faster than governance frameworks scale.

Cloud-native security solutions in this category include:

- CSPM for posture monitoring

- CWPP for runtime protection

- CIEM for identity governance

- CNAPP for platform-level visibility

- AI-SPM (AI Security Posture Management) for agent-aware risk monitoring

These tools, however, lose effectiveness when the policies they enforce still reflect a risk appetite defined before AI agents became part of the production stack. Tooling is only as good as the threat model driving it.

Cloud-Native Security Practices Rely on the 3 Rs Plus Restrict and the 4 Cs

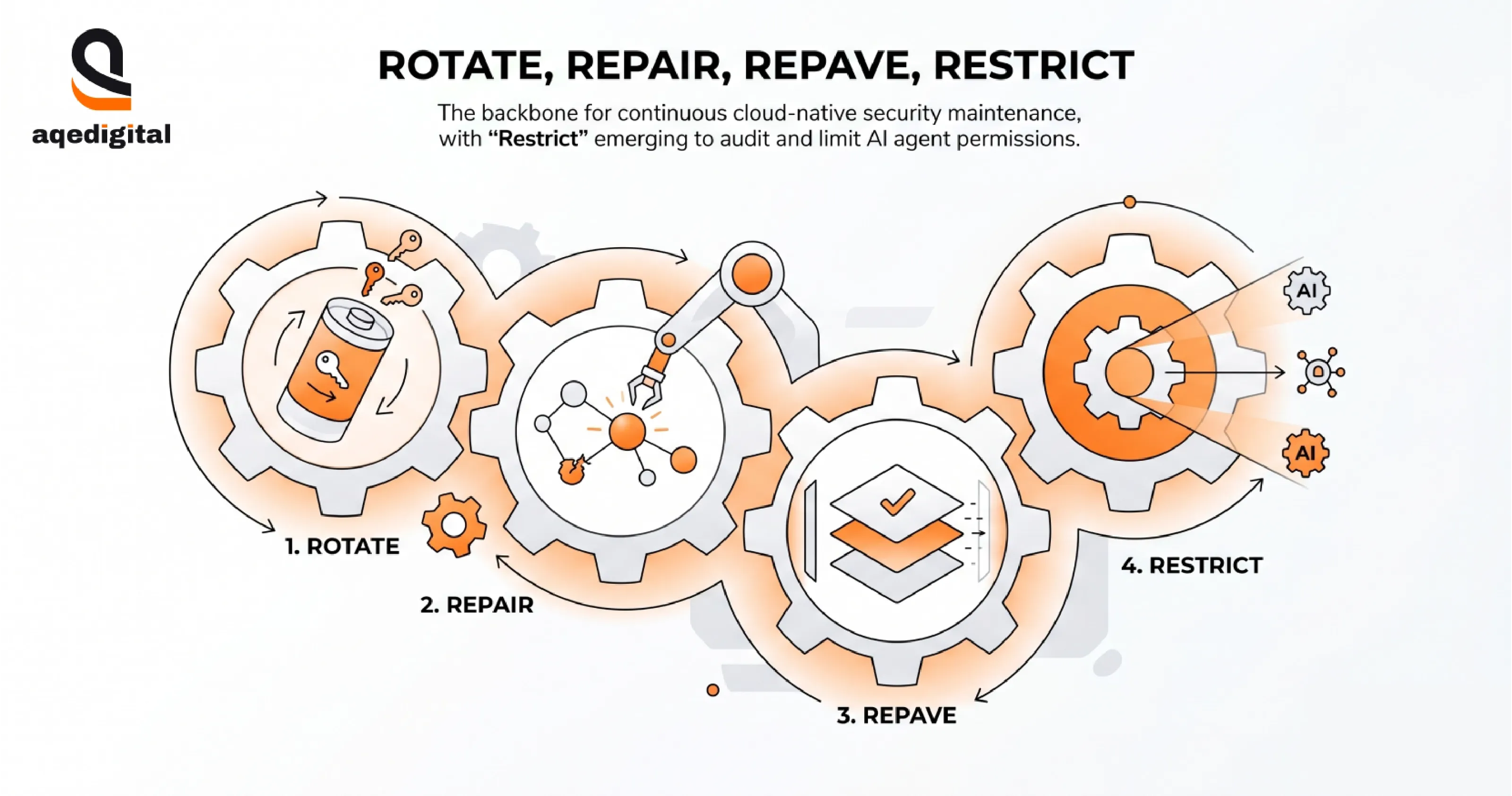

The 3 Rs of Cloud-Native Security Practices

Rotate, Repair, and Repave remain the operational backbone of cloud-native security maintenance: rotating secrets so stolen tokens expire quickly, replacing vulnerable components rather than patching in place, and rebuilding infrastructure from verified clean images on a regular cadence, per Digacore's 4 Cs primer.

In 2026, a fourth R has emerged from practice: Restrict. This means continuously auditing AI agent permissions and removing anything that behavioral data can't justify on a business basis.
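
At its core, Restrict is a set difference between what an agent has been granted and what it has actually been seen using. The sketch below assumes both sides are already available as plain sets of permission strings. The function name and the permission names are illustrative only.

```python
def permissions_to_revoke(granted: dict, observed: dict) -> dict:
    """Map each agent to the permissions it holds but was never observed using.

    granted:  agent_id -> set of permissions currently assigned
    observed: agent_id -> set of permissions seen in behavioral/usage data
    """
    report = {}
    for agent_id, perms in granted.items():
        unused = set(perms) - set(observed.get(agent_id, set()))
        if unused:
            report[agent_id] = unused
    return report
```

The output is a review list, not an automatic revocation. A permission that went unused during the observation window may still have a business justification, which is exactly the question the audit is meant to force.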

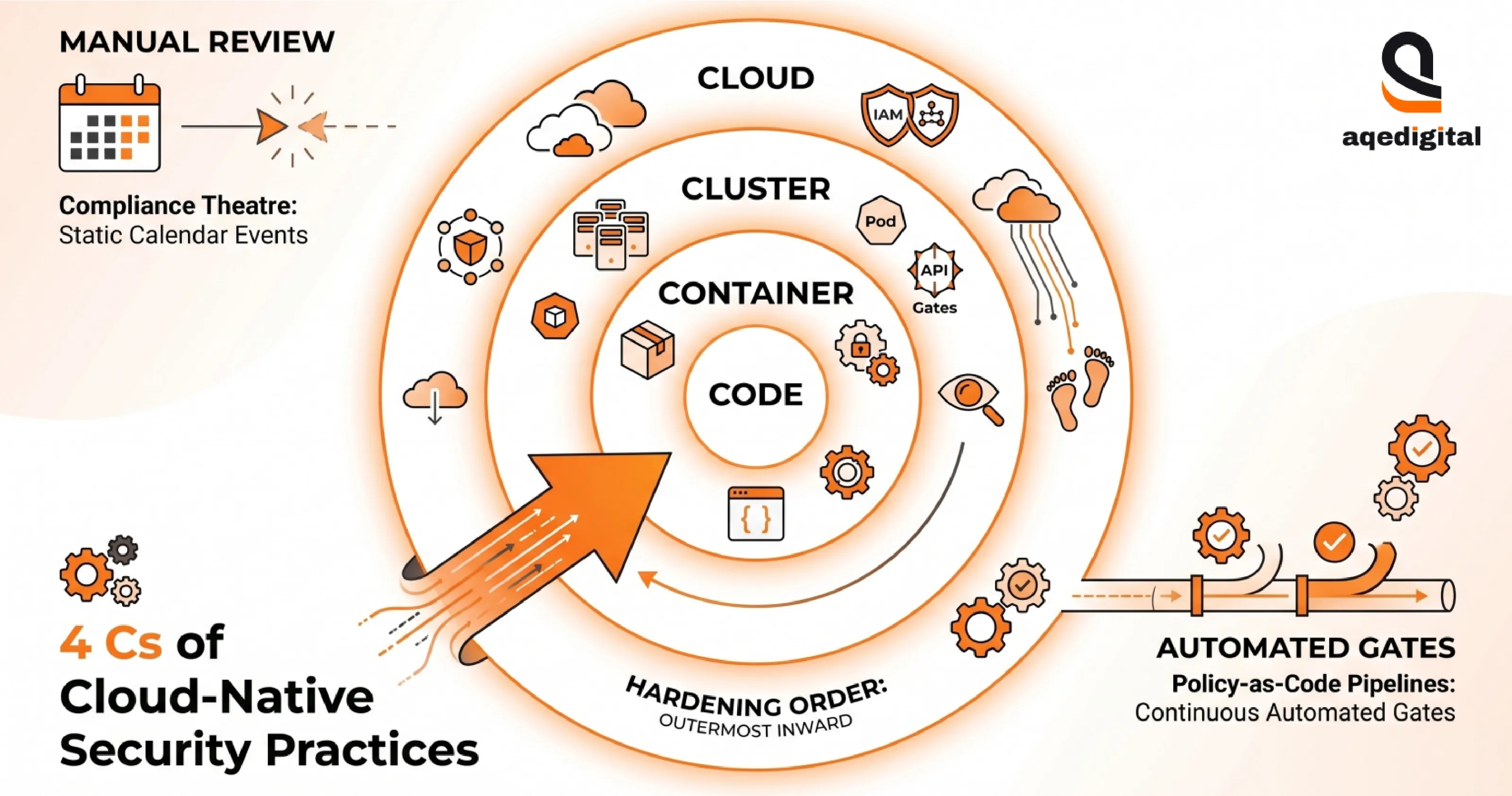

The 4 Cs of Cloud-Native Security Practices

Cloud, Cluster, Container, and Code are the 4 Cs of cloud-native security practices. They provide the architectural frame for where security controls live. Each layer depends on the one outside it, so hardening must move from the outermost ring inward: cloud-level configurations first, then cluster policies, then container security, then code-level guardrails.

Cloud-native application security depends on this framework being embedded in policy-as-code pipelines, not enforced manually through periodic reviews. Whether you are managing a single cluster or executing a broader digital transformation strategy across multi-cloud footprints, the moment these controls become calendar events rather than automated gates, they stop functioning as security controls and become compliance theatre.
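
As an illustration of an automated gate that respects the outside-in ordering, consider the sketch below. It assumes each layer's checks are exposed as zero-argument callables returning `True` on pass. That interface is an assumption for the example, and real pipelines would more likely call a dedicated policy engine.

```python
from typing import Callable, Optional

# Outermost ring first, matching the 4 Cs ordering.
LAYERS = ("cloud", "cluster", "container", "code")

Check = Callable[[], bool]


def first_failing_layer(checks: dict) -> Optional[str]:
    """Run checks layer by layer, outermost first.

    checks maps a layer name to a list of Check callables.
    Returns the name of the first layer with a failing check, or None if all pass.
    """
    for layer in LAYERS:
        for check in checks.get(layer, []):
            if not check():
                return layer  # stop here: inner layers depend on this one
    return None
```

A release pipeline would block the deploy whenever the function returns anything other than `None`, which is what turns the framework from a periodic review into a gate.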

Cloud Security as a Service: Making the 4 Cs Operational for Enterprise Teams

Most enterprise teams don't struggle to understand the 4 Cs framework. They struggle to operationalize it at the pace of their cloud footprint's growth. Cloud security as a service models address this by embedding policy enforcement, drift detection, and agent governance into a managed function rather than requiring internal teams to maintain it continuously.

That approach only holds when the managed function has visibility into every AI agent touching the environment. Partial coverage is often worse than no coverage because it can lead to false confidence in the governance posture.

Cloud-Native Security Tools: Matching the Stack to the Threat Model

Cloud-native security tools work best when they're selected against a specific threat model rather than a vendor feature matrix. For organizations running autonomous AI agents, the priority stack is:

- AI-SPM for agent visibility

- CIEM for machine identity governance

- CSPM for posture drift

- CWPP for runtime protection

Investing in every tool category without integrating them into a unified detection workflow leads to dashboard proliferation rather than security coverage.
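
A unified workflow can start as something very small: normalizing findings from each tool onto a shared resource identifier and surfacing the resources that more than one tool has flagged. The sketch below assumes findings have already been exported into a common shape. The `Finding` type and its fields are hypothetical, not any vendor's schema.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    tool: str         # e.g. "CSPM", "CIEM", "CWPP", "AI-SPM"
    resource_id: str  # shared identifier for the affected resource
    message: str


def correlate(findings: list) -> dict:
    """Return resource_id -> set of tools, for resources flagged by 2+ tools."""
    tools_by_resource = defaultdict(set)
    for finding in findings:
        tools_by_resource[finding.resource_id].add(finding.tool)
    return {
        resource: tools
        for resource, tools in tools_by_resource.items()
        if len(tools) > 1
    }
```

The hard part in practice is agreeing on `resource_id` across tools. Without a shared identifier, each dashboard describes the same asset in a different vocabulary and nothing correlates.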

What Enterprise Teams Should Do Next with Cloud-Native Security Practices

Start with an inventory workshop. Every AI agent, MCP server, and RAG memory store in the environment needs to be cataloged before governance controls can be applied. This is tedious operational work, and it's the most common gap in otherwise sophisticated security programs.

Teams lacking internal bandwidth frequently rely on external AI development services to baseline their agent permissions and conduct this initial inventory. Teams that skip it tend to discover their blind spots when a researcher publishes an exposure analysis.
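
The output of that inventory work can be checked with very little code. The sketch below assumes two inputs: a set of asset identifiers discovered by scanning, and a hand-maintained catalog. The `Asset` type and the two gap categories are illustrative choices, not a standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Asset:
    asset_id: str
    kind: str   # "agent", "mcp_server", or "rag_store"
    owner: str  # empty string means nobody has claimed it


def inventory_gaps(discovered: set, catalog: list) -> dict:
    """Report assets that exist but are not cataloged, and cataloged assets with no owner."""
    cataloged_ids = {asset.asset_id for asset in catalog}
    return {
        "uncataloged": set(discovered) - cataloged_ids,
        "ownerless": {a.asset_id for a in catalog if not a.owner.strip()},
    }
```

Both lists should be empty before governance controls are layered on top. Anything in `uncataloged` is a blind spot, and anything in `ownerless` is an asset nobody will be paged for.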

How Enterprises Can Leverage AQe Digital for Cloud-Native Security in the AI Era

Cloud-native security practices only hold when agent governance is treated as a strategic control function, not a compliance afterthought. Untrusted inputs, privileged access, and outbound capabilities are the default architectural patterns for AI agents in production.

The teams getting ahead of this run agent-inventory workshops before selecting tooling. They embed the 3 Rs plus Restrict into release cycles. They measure the 4 Cs at every layer. The rest learn of their exposure from a third-party researcher rather than from their own monitoring.

If your cloud security framework hasn't accounted for machine identity governance and MCP server exposure, that's where to start.

AQe Digital begins with an agent catalog. This includes every autonomous tool, every MCP server connection, and every RAG memory store in your production environment.

That catalog drives zero-trust policy enforcement, least-privilege configuration, and AI-SPM monitoring grounded in how the environment actually operates today.

The difference isn't a framework. Any consultancy can publish one. AQe Digital brings cross-industry pattern recognition from cloud-native engagements across manufacturing, enterprise software, and regulated sectors, combined with the technical depth to ship policy-as-code within your release cycle, not after it.

For CTOs and CISOs building their 2026 security roadmap, the question isn't whether AI agent governance belongs on it. It does. The question is whether the team building it has seen this architecture fail under delivery pressure before.